Tiny Startup Humiliates Nvidia and AMD: Runs 700B AI Models on a $0 Cloud Bill Using Decade-Old Chips

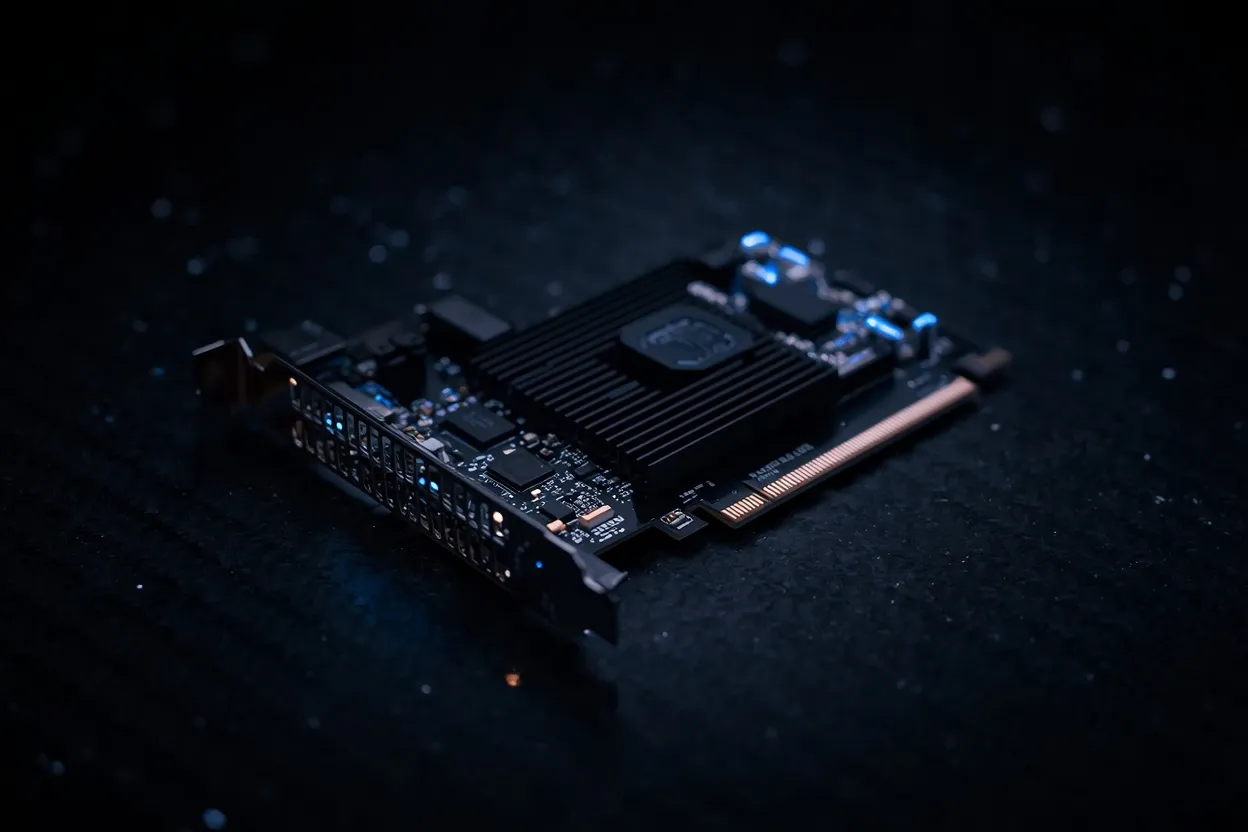

A Taiwanese company called Skymizer just announced a PCIe AI accelerator card that, on paper, does something a single Nvidia or AMD card cannot: run a 700 billion parameter language model on one device while drawing just 240 watts of power. The card is called the HTX301, and the specs alone are enough to make anyone in enterprise AI infrastructure sit up and pay attention.

But the real story here isn't just the performance claim. It's how Skymizer achieved it: with chips built on a 28-nanometer process that is more than a decade old, paired with standard LPDDR4 and LPDDR5 memory instead of the expensive HBM that defines modern high-end AI hardware. The company will show the card at Computex, and that event will tell us whether these numbers are real or just well-crafted marketing.

Table of Contents

- What Is the Skymizer HTX301 and Why the AI World Is Stunned

- HTX301 Full Specs: 384GB Memory at Just 240 Watts

- The 28nm Trick: How Old Chip Architecture Outperforms Modern GPU Design

- HTX301 vs Nvidia RTX PRO 6000 vs AMD Instinct MI350P: Honest Numbers

- Which Businesses Should Actually Buy This Card

- Computex Reality Check: Will HTX301 Deliver What Skymizer Promises

- Frequently Asked Questions

What Is the Skymizer HTX301 and Why the AI World Is Stunned

The HTX301 is built on Skymizer's HyperThought platform, which uses a next-generation LPU — language processing unit — architecture designed specifically for LLM inference workloads. Each card holds six HTX301 chips working in parallel and offers up to 384 GB of total memory. Skymizer claims the card delivers 30 tokens per second at 0.5 TOPS across 100 GB per second of memory bandwidth.

The stunner isn't the raw throughput number. It's the power figure. Nvidia's RTX PRO 6000 Blackwell pulls roughly 600 watts. AMD's Instinct MI350P draws considerably more than the HTX301 as well. Skymizer's card claims to do comparable LLM inference work at 240 watts — less than half of what the Nvidia card requires. For data centers where power costs and cooling infrastructure are major operational expenses, that gap is significant.
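To put the power gap in operating terms, here is a rough sketch of annual energy use for one card running around the clock. The 240 W and roughly 600 W figures are the ones quoted above; the electricity price and the `pue` overhead multiplier are hypothetical placeholders chosen for illustration, not numbers from Skymizer or Nvidia.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, assuming continuous operation


def annual_energy_kwh(card_watts: float, pue: float = 1.0) -> float:
    """Energy drawn in one year by a card held at a constant load.

    pue is a hypothetical facility overhead multiplier (cooling, power
    conversion). 1.0 counts the card's own draw only.
    """
    return card_watts * pue * HOURS_PER_YEAR / 1000.0


def annual_energy_cost(card_watts: float, price_per_kwh: float, pue: float = 1.0) -> float:
    """Yearly electricity cost for one card at the given price per kWh."""
    return annual_energy_kwh(card_watts, pue) * price_per_kwh


if __name__ == "__main__":
    PRICE = 0.12  # assumed USD per kWh, illustrative only
    for name, watts in [("HTX301 (claimed)", 240), ("RTX PRO 6000 (approx.)", 600)]:
        kwh = annual_energy_kwh(watts)
        cost = annual_energy_cost(watts, PRICE)
        print(f"{name}: {kwh:,.0f} kWh/year, about ${cost:,.0f}/year")
```

At a constant full load the difference works out to 3,153.6 kWh per card per year before any cooling overhead. Real cards rarely sit at their rated draw all day, so treat this as an upper-bound comparison rather than a forecast.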

Honestly, the skeptic in me wants to see Computex numbers before calling this confirmed. Startup claims without independent benchmarks are common in this space. But the architecture at least has a coherent explanation for why it works, which separates it from pure vaporware.

HTX301 Full Specs: 384GB Memory at Just 240 Watts

The memory configuration is where the HTX301 makes its most unusual choice. Instead of HBM3E — the high-bandwidth memory used in AMD's Instinct MI350P — or GDDR solutions, Skymizer built the card around standard LPDDR4 and LPDDR5. These are the same memory types found in laptops and smartphones. Using them in an AI accelerator is unconventional, but it directly explains the low power draw and lower cost structure.

The tradeoff is bandwidth. HBM3E in the AMD MI350P delivers dramatically higher memory bandwidth than LPDDR5. Skymizer compensates through compression. The card applies efficient compression to both model weights and KV cache, which reduces the effective memory bandwidth requirement for LLM inference. According to Skymizer, this compression approach outperforms unoptimized llama.cpp by 9 to 17.8 percent on the same tasks.
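Why compressing both weights and KV cache matters can be seen from a simple count of the bytes a card must read to produce one token. The sketch below uses the publicly documented shape of Llama 2 7B (32 layers, 32 attention heads of dimension 128); the byte widths stand in for "uncompressed" and "compressed" and are assumptions, since Skymizer has not published the details of its compression scheme.

```python
def kv_cache_bytes_per_token(n_layers: int, n_kv_heads: int, head_dim: int,
                             bytes_per_value: float) -> float:
    """KV cache added per token: one key and one value vector
    for every layer and every KV head."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value


def bytes_read_per_decoded_token(weight_bytes: float, kv_bytes_per_token: float,
                                 context_len: int) -> float:
    """Approximate memory traffic to decode one token at batch size 1:
    all weights are read once, plus the KV cache for the whole context."""
    return weight_bytes + kv_bytes_per_token * context_len


if __name__ == "__main__":
    N_PARAMS = 7e9   # nominal parameter count for a "7B" model
    CONTEXT = 4096   # tokens already in the context window

    # Baseline: 16-bit weights and 16-bit KV cache.
    base = bytes_read_per_decoded_token(
        N_PARAMS * 2, kv_cache_bytes_per_token(32, 32, 128, 2), CONTEXT)

    # Hypothetical compressed case: 4-bit weights, 8-bit KV cache.
    packed = bytes_read_per_decoded_token(
        N_PARAMS * 0.5, kv_cache_bytes_per_token(32, 32, 128, 1), CONTEXT)

    print(f"baseline:   {base / 1e9:.2f} GB per token")
    print(f"compressed: {packed / 1e9:.2f} GB per token")
    print(f"traffic reduction: {base / packed:.2f}x")
```

At 16-bit precision the KV cache for this model shape grows by 524,288 bytes per token, which reaches 2 GiB at a 4,096-token context. That is why compressing weights alone leaves a growing share of the traffic untouched on long prompts.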

The card fits into a standard PCIe slot and works in any air-cooled server without modifications to power delivery or cooling infrastructure. That matters because upgrading a data center for liquid cooling or higher-amperage power circuits is expensive and slow.

The 28nm Trick: How Old Chip Architecture Outperforms Modern GPU Design

Using a 28nm process node in 2025 sounds like a step backward. Modern AI chips from Nvidia and AMD use 4nm or 3nm nodes, which pack far more transistors into the same die area and achieve higher performance per watt at peak throughput. So why does 28nm work here?

The answer is workload specificity. General-purpose GPUs are designed to handle everything — gaming, rendering, training, inference, video encoding. That flexibility requires complex silicon. The HTX301 is not general-purpose. It is designed exclusively for LLM inference, and specifically for the memory-bandwidth-bound nature of that workload. A specialized architecture can extract efficiency that a general GPU cannot, even on older silicon.
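The "memory-bandwidth-bound" point can be made concrete with a simple roofline-style estimate: generating one token at batch size 1 means streaming every weight from memory once and doing roughly two operations per weight, so whichever takes longer sets the ceiling. This is a sketch, not Skymizer's performance model. The 100 GB/s and 0.5 TOPS inputs are the figures quoted earlier in this article; the 4-bit weight width and the 7B parameter count are assumptions picked for illustration.

```python
def decode_tokens_per_second(n_params: float, bits_per_weight: float,
                             mem_bandwidth_bytes_s: float,
                             compute_ops_s: float) -> dict:
    """Upper bound on single-stream decode speed from a two-term roofline.

    Ignores KV cache traffic, activations, and any overlap inefficiency,
    so real throughput will be lower than the ceiling reported here.
    """
    weight_bytes = n_params * bits_per_weight / 8
    ops_per_token = 2 * n_params  # one multiply and one add per weight

    memory_time = weight_bytes / mem_bandwidth_bytes_s
    compute_time = ops_per_token / compute_ops_s

    return {
        "memory_bound_tps": 1 / memory_time,
        "compute_bound_tps": 1 / compute_time,
        "ceiling_tps": 1 / max(memory_time, compute_time),
        "limited_by": "memory" if memory_time >= compute_time else "compute",
    }


if __name__ == "__main__":
    result = decode_tokens_per_second(
        n_params=7e9,                 # assumed 7B-class model
        bits_per_weight=4,            # assumed 4-bit weights
        mem_bandwidth_bytes_s=100e9,  # 100 GB/s, as quoted in the article
        compute_ops_s=0.5e12,         # 0.5 TOPS, as quoted in the article
    )
    for key, value in result.items():
        print(key, value if isinstance(value, str) else round(value, 1))
```

Under these assumptions the memory term is the binding one, at roughly 29 tokens per second against about 36 for compute. In a regime like that, adding transistors for more arithmetic would not speed up single-stream decoding, which is the argument for pairing modest 28nm compute with a wide pool of cheap memory.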

The 28nm node also has practical advantages: mature manufacturing with high yield rates, lower cost per wafer, and availability from multiple foundries. Skymizer isn't competing with TSMC's leading-edge allocation alongside Nvidia and Apple. They're using capacity that is abundant and cheap. That feeds directly into a lower card price, though Skymizer hasn't announced pricing yet.

HTX301 vs Nvidia RTX PRO 6000 vs AMD Instinct MI350P: Honest Numbers

Here's what the published specs actually say, without inventing numbers the source material doesn't provide:

| Card | Memory | Memory Type | Power Draw | Max LLM Size / Notes |
|---|---|---|---|---|
| Skymizer HTX301 | Up to 384 GB | LPDDR4 / LPDDR5 | 240W (claimed) | 700B params (claimed) |
| Nvidia RTX PRO 6000 | 96 GB | GDDR7 | ~600W | Limited by 96 GB VRAM |
| AMD Instinct MI350P | 144 GB | HBM3E | Higher than HTX301 | Limited by 144 GB VRAM; up to 4,600 TFLOPS at MXFP4 |

The memory gap is the clearest differentiator. At 384 GB, the HTX301 can load models that simply don't fit in 96 GB or even 144 GB of VRAM — which is why Skymizer can claim 700B parameter capability. Running a 700B model on a single Nvidia RTX PRO 6000 isn't possible without model sharding across multiple cards. On the HTX301, Skymizer says it runs on one.
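A quick capacity check shows what the 700B claim implies about precision. The sketch below counts weight storage only, in decimal gigabytes, and ignores KV cache and activations. The bit widths are assumptions for illustration, because the announcement as reported here does not say what precision Skymizer uses for its 700B figure.

```python
def weight_footprint_gb(n_params: float, bits_per_weight: float) -> float:
    """Storage for model weights alone, in decimal GB."""
    return n_params * bits_per_weight / 8 / 1e9


def spare_capacity_gb(n_params: float, bits_per_weight: float,
                      capacity_gb: float) -> float:
    """Memory left after loading weights; negative means it does not fit."""
    return capacity_gb - weight_footprint_gb(n_params, bits_per_weight)


if __name__ == "__main__":
    CARDS = {"HTX301": 384, "MI350P": 144, "RTX PRO 6000": 96}  # GB, from the table
    N_PARAMS = 700e9

    for bits in (16, 8, 4):
        need = weight_footprint_gb(N_PARAMS, bits)
        print(f"{bits}-bit weights: {need:,.0f} GB")
        for card, capacity in CARDS.items():
            spare = spare_capacity_gb(N_PARAMS, bits, capacity)
            verdict = f"fits, {spare:,.0f} GB spare" if spare >= 0 else "does not fit"
            print(f"  {card} ({capacity} GB): {verdict}")
```

A 700B model needs 1,400 GB at 16 bits, 700 GB at 8 bits, and 350 GB at 4 bits. Only the last fits in 384 GB, leaving about 34 GB for KV cache and everything else, so the single-card claim implicitly assumes weights stored at around 4 bits each or compressed further.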

Key Takeaways

- The HTX301 packs 384 GB of LPDDR memory onto a single PCIe card — enough to load 700B parameter models that won't fit on Nvidia or AMD cards without multi-card setups.

- At 240W claimed power draw, it consumes less than half what Nvidia's RTX PRO 6000 requires, which reduces both electricity costs and cooling infrastructure needs.

- Skymizer's bet on 28nm rests on LLM inference being memory-bandwidth-bound rather than compute-bound: on that one task, older specialized silicon can be more efficient than newer general-purpose silicon.

Which Businesses Should Actually Buy This Card

The HTX301 is not a training card. If your workload involves training large models from scratch or fine-tuning at scale, this card is not the right tool — that work is compute-intensive in ways the HTX301's architecture isn't optimized for. Nvidia's H100 and AMD's MI300X remain the standard for training.

But for inference — running a deployed model to answer queries, generate code, or handle domain-specific workflows — the HTX301's profile makes sense for specific buyers:

- Enterprises that want to run large open-source models like Llama 3 70B or larger on their own hardware without paying cloud API costs

- Organizations in regulated industries — healthcare, finance, legal — where sending data to a cloud API raises compliance issues

- Companies already running air-cooled server infrastructure who can't easily add liquid cooling for high-TDP cards

- Businesses deploying agentic AI workflows for coding assistance or automation where latency and cost per token matter more than peak FLOPS

The card is not for someone who needs GPU flexibility. If you also need to run CUDA-based workloads, render 3D graphics, or train models, you need an Nvidia or AMD card. The HTX301 does one thing and, if Skymizer's claims hold, does it efficiently.

Computex Reality Check: Will HTX301 Deliver What Skymizer Promises

Skymizer will preview the HTX301 at Computex, allowing independent journalists and analysts to see the card run live. That matters because the gap between announced specifications and real-world performance in AI accelerators has been wide before: multiple startups have raised significant capital and then shipped products that underdelivered.

The specific number to watch is Skymizer's claim of 240 tokens per second on Llama2 7B, the benchmark the company cited. Independent testers at Computex will have a straightforward test to run, and the result will either confirm or undermine the card's positioning. The 240W power draw figure is equally testable with a simple power meter.

If the numbers hold, the HTX301 changes the on-premises AI infrastructure calculation for a meaningful segment of the market. If they don't, Skymizer joins a long list of companies that announced impressive specs and shipped something less impressive. The answer comes at Computex.

Frequently Asked Questions

What is the Skymizer HTX301 PCIe AI accelerator card?

The Skymizer HTX301 is a PCIe AI accelerator built on Skymizer's HyperThought LPU platform. It contains six HTX301 chips and up to 384 GB of LPDDR4/LPDDR5 memory, and Skymizer claims it can run LLMs with up to 700 billion parameters on a single card at 240 watts of power consumption.

How does the HTX301 run 700 billion parameter LLMs on a single card?

The 384 GB memory capacity is large enough to load models that won't fit on standard GPU VRAM. Skymizer's HyperThought platform applies compression to model weights and KV cache, reducing effective bandwidth requirements. The result, per Skymizer's benchmarks, outperforms llama.cpp by 9 to 17.8 percent on the same inference tasks.

How does HTX301 compare to Nvidia RTX PRO 6000 in power consumption?

The HTX301 draws 240 watts according to Skymizer's claim. Nvidia's RTX PRO 6000 Blackwell draws roughly 600 watts — more than double — for comparable LLM inference work. AMD's Instinct MI350P also consumes more power than the HTX301, though it offers 4,600 peak TFLOPS at MXFP4 precision for compute-heavy workloads.

Does the HTX301 really use only 240 watts of power?

That is Skymizer's stated specification. It hasn't been independently verified yet. Computex is where journalists and analysts will run their own tests. The 240W figure is testable with basic equipment, so verification should happen quickly once the card is available for hands-on review.

What memory does the Skymizer HTX301 use instead of HBM?

The HTX301 uses LPDDR4 and LPDDR5 — the same memory technology used in laptops and smartphones. This is significantly cheaper and lower-power than HBM3E but also lower bandwidth. Skymizer compensates with compression techniques that reduce the bandwidth demand for LLM inference specifically.

When will the Skymizer HTX301 be available to buy?

No commercial release date or pricing has been announced. The card will be previewed at Computex 2025. Availability details should follow after the event, depending on how independent testing goes and whether Skymizer has manufacturing capacity lined up.

Can the HTX301 replace a GPU cluster for enterprise AI workloads?

For LLM inference specifically, Skymizer claims it can handle workloads that currently require multi-GPU clusters. For model training, fine-tuning, or CUDA-dependent workloads, it cannot — those still require Nvidia or AMD hardware. The HTX301 is an inference-only card optimized for one specific type of workload.

Conclusion

The Skymizer HTX301 makes a specific and testable claim: 700B parameter LLM inference on a single PCIe card at 240 watts, using 28nm chips and LPDDR memory that costs a fraction of HBM. If that claim survives Computex testing, it changes the math for enterprise AI teams that have been told the only path to large model inference is expensive cloud APIs or multi-GPU server clusters.

Computex is the moment of truth. The benchmarks Skymizer cited — 30 tokens per second, 240 tokens per second on Llama2 7B, 9 to 17.8 percent improvement over llama.cpp — are specific enough to verify quickly. Watch what independent reviewers report from the show floor. That will determine whether the HTX301 is a real option for on-premises AI infrastructure or just an impressive slide deck.
